If the cache is only small (as in the default 2 MB (-2000)) then if the same page is modified again after having been written out from the cache, then it will be re-read and re-written. The expression means a bloody big cache - in that case it was about 4 GB which is large enough to hold all the pages in the cache so they were never written until the end. This means that if there are more than 2 MB of dirty pages in the cache they get flushed to disk. The default -2000 means about 2 Megabytes. Default in sqlite3 shell (ver 3.33) is "-2000", what does minus sign mean? I use the default. Large? The numbers from your test indicate that ordering for such cache is irrelevant. You mention Queen Marry II cache, but I do not know what this means. You can also use the column-name in place of the column-ordinal (usually). "ORDER BY 7, 3, 1, 8" would mean order by column 7 and within that by column 3 and within that by column 1 and within that by column 8. "ORDER BY 1" means to order by the first result column in the select. I am not sure if my CSV files are ordered already, but I can certainly try. I suspect, you mean that records are faster to insert if the insert happens in the order of the PRIMARY KEY. I do not exactly understand what "in order" means, In your example, I do not know what "ORDER BY 1" does.

Note that I have reduced the cache to itty-bitty (which is the default) since this will have an devastatingly huge impact on the amount of I/O performed (I have a cruise-ship-load of RAM for caching, so without this the difference is less noticeable).Įven with the sort (order by clause) the in-order insertion is more than 10 times faster than the out-of-order insertion. In the second case the insertion is "in-order" so the B-Tree is always built in-order with a minimum of rebalancing as the insert progresses. In the first case the insertion is in "random" order, meaning that the index structures must be continually updated and the B-Tree rebalanced as the insert progresses. Sqlite> insert into x select random() from wholenumber where value between 0000 order by 1 Sqlite> insert into x select random() from wholenumber where value between 0000 Sqlite> create table x(x text primary key not null) This is extremely faked, but notice the difference between in-order insertion and out-of-order insertion: >sqlite sample.db In my case PRIMARY KEY is a string (used to be 38 digit integer, too large for SQLite as I understand). Maybe my two-step process is wrong and there is a better way? I know PRAGMA ignore_check_constraints could turn off CHECK. If I am correct, is there a way to disable or otherwise speed up the second step? I know FOREIGN KEY constrain can be turned off (and I keep it off). Plus, I know there is PRAGMA integrity_check that can be used to verify at the end after everything is inserted. Since I know the data source is a database, I do not need to enforce the check on the fly. I suspect but not sure the slowdown is caused by enforcing uniqueness of the PRIMARY KEY. The second step is slow and it gets slower as database size grows. Create table according to schema with PRIMAMRY KEY and other constraints and INSERT from QQQ into the final destination.

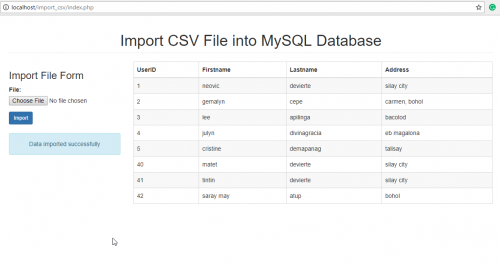

This makes all columns TEXT since no type is available. import of CSV file into a table QQQ that will be deleted once all finished. I do this in two steps (all within sqlite3 shell): I therefore have to re-create schema, clean up (NULL versus empty string, convert to real, etc). These are dumps from existing database (unfortunately). I have large CSV files (30GB, ~100M records ).

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed